No cigarettes. Avoid the desert and the ocean. Don’t describe the body unless it’s in pain or at a crime scene. Use “I’d” instead of “I would.” If your heroine gulps, trembles, and jerks, consider having her assertively run, shoot, and eat. (Note to Hamlet: “Hesitation doesn’t keep pages turning.”) Focus on human closeness, throw in some modern technology, and even if it sounds counter-intuitive, remember: we’d rather read about taxes than revolutions.

These are tips I’ve gleaned from The Bestseller Code, a book of criticism by Jodie Archer and Matthew L. Jockers, who used a computer to churn through 20,000 novels published in the past three decades for themes, plots, styles, and characters common to commercially successful fiction. The authors—Archer is a Penguin editor, Jockers is an associate professor of English—claim that their eponymous algorithm can forecast future bestsellers with up to 90 percent accuracy.

The project is part of a broad movement in literary studies called “distant reading.” Developed by Franco Moretti at Stanford University, where Archer did her PhD, distant reading is an inversion of traditional close reading, in which the critic exhaustively dissects a particular text. By harnessing computer technology, distant reading analyzes literature in ways that only computers can: as “big data,” the stuff of statistics. Literary features are traced over tens of thousands of books, more than any human could read in a lifetime, in order to expand the range of study beyond a narrowly defined canon. For example, an ongoing Stanford project maps the experience of suspense over scores of books published since 1750. Researchers hope to pinpoint what formal features caused the sensation of suspense, and to observe how those features—and that sensation—changed across time. To understand such underlying patterns in literature, says Moretti, we have to stop actually reading books.

Bestsellers are ideal for distant reading, and not simply because closely reading John Grisham’s corpus sounds like one of the torments of hell. We all sense that bestsellers are predicated on a formula, but something about the novel form—its length, its intricacy, its dynamic interaction with the reader’s life experience—has made that formula difficult to discern. For Leonard Woolf, it required “a touch of naïvety, a touch of sentimentality, the story-telling gift, and a mysterious sympathy with the day-dreams of ordinary people.” But whatever that “mysterious sympathy” is, even seasoned editors struggle to spot it, as evidenced by the twelve rejections J.K. Rowling received for Harry Potter and the Philosopher’s Stone. By comparison, a three-minute hit song is easy to identify—as Prince said, “If it feels good, cool.”

“Only a person with a Best Seller mind can write Best Sellers,” according to Aldous Huxley. No doubt he was being contemptuous, but he wasn’t wrong: despite the diversity of genres on the bestseller list, there really is a series of habits shared by top-selling authors. Indeed, according to Archer and Jockers, bestsellers “have so many latent things in common that they are practically a specialized genre in themselves.” Run through the algorithm, books as superficially dissimilar as The Da Vinci Code and Fifty Shades of Grey are shown to be manipulating readers in lock-step formation.

Bestsellers exhibit “shorter, cleaner sentences, without unneeded words,” and vastly favour periods and question marks over colons and semicolons. “Okay” and “Ugh” are tops; so is “thing,” which appears six times more often in bestselling books. Bestselling characters, according to the code, “have something magnetic about them that makes them stand out from the crowd.” Mitch McDeere in John Grisham’s The Firm (1991) is singled out as exemplary: “handsome, Harvard trained, a relentless thinker and workaholic, and he doesn’t need sleep.” Winning characters “need” and “want” twice as often as the “wishers, supposers, or yawners” of losing prose (I’m looking at you, Bartleby). “There is something altogether more attractive about a character whose body makes more simple and controlled gestures,” the authors write. “Readers want someone to be not to seem. They want someone to do not to wait.”

It’s difficult to know how seriously to take all this. Archer and Jockers have great expectations for their algorithm, which they believe could keep the publishing industry “not just running but diverse.” Their methodology, however, may make that hard. For its data pool, The Bestseller Code uses the New York Times bestseller list—a notoriously slippery record. While it’s received as a reflection of the top-selling books in America, in reality the Times list is an idiosyncratic construct, so much so that it’s legally considered editorial content, not objective fact. In its analysis of what goes on and off the list, The Bestseller Code may have cracked that particular list’s mercurial preferences, rather than the secret to successful novels in general.

That said, success on the Times list is, in many ways, success in general. A generation ago, the critic Richard Ohmann famously studied the profound role the New York Times plays in creating the canon of American literature. In Ohmann’s analysis, the Sunday Book Review—with its influential trinity of reviews, advertisements, and bestseller lists—creates “a nearly closed circle of marketing and consumption.”

The Bestseller Code appears to be trapped inside that very circle. Take the following statement from the book’s analysis of bestselling themes and topics:

“Clearly there is something compelling and significant in the fact that a computer read several thousand contemporary novels, equally blind to the status of every author, and picked Danielle Steel and John Grisham as two authors most likely to succeed based on theme and topic.”

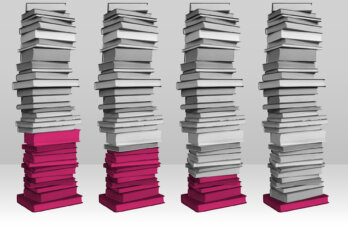

It’s genuinely impressive that computers can read on the level of theme and topic, but just a moment before, the authors describe the illustrious history of Steel and Grisham on the bestseller list. A single Steel book charted for a record 381 weeks, while Grisham once occupied the list’s top four spots at the same time. If the code is meant to detect whether a book conforms to the tastes of the list, then how can it be compelling or significant that Steel and Grisham aced the test? I’m reminded of Nietzsche’s complaint, that “If I create the definition of a mammal and then, having inspected a camel, declare: Behold, a mammal,” then nothing has been truly brought to light. If Steel has defined the bestseller, and then matches that definition, what has really been illuminated?

While Archer and Jockers hope their algorithm will persuade publishers “to use more of their Patterson/King/Steel budget on the young writers who may one day replace them,” the notion that an algorithm will lead to greater diversity is wishful thinking. Not only are bestsellers categorically homogeneous, as the authors themselves argue, but the code is founded on the data of yesterday’s bestsellers. In other words, it’s designed to predict what looks best in pop culture’s rearview mirror. A publishing house that relied on the algorithm would be beholden to the past. Unless paired with an unscientific gut instinct, the code would only encourage publishers to replace Patterson/King/Steel with authors who basically write like them.

The real story of popular taste—how it violates and shifts the paradigm—is better perceived in the outliers. To learn more about the 10 to 20 percent the code got wrong, I wrote to Jockers. One blockbuster error, he replied, concerned Game of Thrones, which the code thought was a certain non-bestseller. It’s not difficult to see why: the series transgresses virtually every stricture set out in the book. Such outliers throw into relief the Faustian bargain represented by the code. While a publisher using the algorithm might not necessarily have rejected J.K. Rowling, they surely would have rejected George R.R. Martin’s rule-defying series. Perhaps this would lead to a more lucrative industry, but I fail to see how it would lead to a more diverse one.

As for books that weren’t bestsellers, but which the algorithm predicted would be—“That’s a fascinating story that we decided to save for another day,” Jockers told me. I wonder if that story can be told scientifically, or if Archer and Jockers will have to resort to a more subjective literary analysis. For while statistics give the impression of laying bare a hidden process, in the case of literature there’s always another layer, one that returns us to the human subject. What Woolf called mysterious sympathy—a structure of feeling undergirding popular taste—is what finally draws us to certain themes, plots, styles, and characters. That structure isn’t immutable; it’s contingent on the billion chaotic factors that comprise human experience.

While they’ve set the standard of the modern bestseller, Steel, Grisham, and Brown should eventually be displaced, not enshrined, and enshrinement seems like the fated outcome of relying on quantitative analysis. Novelty—ultimately the root of the novel form—arrives against the grain of patterns. It comes to us through intuition, divination, and accident. It upends data. Computers can tell us remarkable things about the tastes of yesterday. They shouldn’t tell us what to read tomorrow.